Speculation: How Much Should Information Morally Matter?

A brief reply to Bentham

A key problem many people see in the kind of expansive total-welfare utilitarianism advocated by Substack’s Shrimp Team, in particular their generalissimo Bentham's Bulldog, is that it seems uniquely sensitive to new information. I pointed this out recently:

And Bentham's Bulldog replied:

Here I want to outline very briefly why I think my view makes sense. Unfortunately, to do so I may have to entertain a few hypotheticals (ick), but I will try to keep them somewhat plausible.

Consider six ethical rules, which I have given shorthand names:

The good involves doing that which subjectively seems good based on your intuitions, primarily to those who are genetically similar to you. (Subjective Kinism)

The good involves doing, to those who are genetically similar to you, those things which they regard as good for themselves. (Empathetic Kinism)

The good involves doing, to those who are genetically similar to you, those things which in actual fact minimize the number of nociceptors which are activated to create the sensation of pain. (Objective Kinism)

The good involves doing that which subjectively seems good based on your intuitions, to all entities capable of experiencing good. (Subjective Universalism)

The good involves doing, to all entities capable of experiencing good, those things which they regard as good for themselves. (Empathetic Universalism)

The good involves doing, to all entities capable of experiencing good, those things which in actual fact minimize the number of nociceptors which are activated to create the sensation of pain. (Objective Universalism)

Now, imagine 4 plausible information shocks:

New study shows that insects have 3x as many nociceptors as previously believed.

Why does this matter? Well, more nociceptors = more pain, so if insects have a lot more nociceptors, then they feel a lot more pain, and so ethical systems that value insect pain will be impacted.

New study shows that shrimp population growth is not impacted by addition of pain-causing substances to their water supply

Evolution rewards successful reproduction through positive feedback. In humans the most obvious example is orgasm, but many of our other highest-bliss moments are basically evolution giving us a pat on the back for successfully propagating genes. Thus, to the extent beings reproduce at or above their species-historic rate of reproduction, we can infer evolution is giving them hedonic rewards, whereas species reproducing below their historic norm are likely lacking such rewards.

Your brother tells you that he has suffered a traumatic brain injury and that certain smells trigger significant, though episodic, pain for him

Some ethical systems place greater priority on your brother’s subjective self-reports than others, and some ethical systems prioritize near kin more than others.

Astronomers discover a comet the size of a small moon that will strike Earth directly in 70 million years, creating a pretty “hard” limit on Earth-based life far shorter than the 300-700 million year limit set by the sun’s gradual brightening as it burns through its hydrogen.

Some ethical systems expect you to prioritize all life, whereas others expect you to prioritize certain types of life. Some kind of life is likely to persist on Earth for a very long time, but it may not be genetically similar to modern humans: 70 million years ago the largest mammal on Earth was about a 16-inch-long rat. In 70 million years, it’s not clear anything genetically like humans will be on Earth. But other life will be.

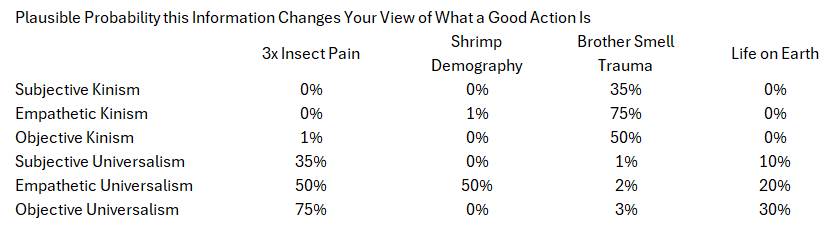

Here’s a cute little chart of how much I think each moral system is influenced by each piece of new information:

So for example: objective kinism might care a bit about insect pain! Virtually all multicellular life on Earth shares like 20%+ of its DNA. Ants are about 20-40%, shrimp about 50-70%. Of course, that’s mostly DNA we don’t “care” much about for various reasons. If we use genealogical distance, the last common ancestor of insects and humans was some kind of worm maybe 570 million years ago, or so we think. So objective kinists might look at that and say “Hey, insects actually are a tiny bit genetically like us! We should care somewhat about their pain!”

But subjective and empathetic kinists won’t care much, because they’re unlikely to think insects have subjective experiences sufficiently like their own to make anything of a change in estimated nociceptors.

Universalists, meanwhile, are very impacted! Subjective universalists may revise less than empathetic or objective universalists, because they may have greater variance in prior beliefs about insect pain, and because their intuitions are not formally bound to new information. Empathetic universalists should be more influenced, because they care about what insects themselves regard as good rather than about their own intuitions, and new information about insect nociception could inform that. And objective universalists should be very impacted, since their whole thing is suppressing cosmos-wide negative nociception as much as possible.

On shrimp demography in response to pain, interestingly, I think only the empathetic groups care. Subjectivists don’t care: their own intuition is that pain is bad, so pain is bad. Objectivists also don’t care: negative nociception is bad even if the species itself doesn’t seem to act like it’s bad on a credible metric. Only the empathetic groups respond: they observe that inflicting pain on shrimp doesn’t seem to change the core evolutionary reward function for shrimp, and thus infer that interventions to reduce shrimp pain may not be worth much to shrimp. (Note: I have no idea how shrimp reproduction actually responds to interventions of this or other types, except that cutting off shrimp eyestalks apparently does increase shrimp reproduction in captivity, though even that seems a bit debated.)

On your brother’s brain trauma, kinists care a lot. Empathetic kinists care the most, I think? Regardless, it’s your brother. He shares a ton of your DNA. It’s extremely wrong to inflict on him even episodic harms of a kind that he, you, and anybody would disprefer. Universalists, however, are in a different boat. Your brother is a very small share of cosmos-wide experience. His informing you of his new condition has basically no effect on which actions in life are best and which are worst; it probably doesn’t change the rank order of the top 1,000 or the bottom 1,000 possible actions. I’m allowing some effect to account for universalists making efficiency judgments of various kinds, but in principle your brother is just not an important part of cosmos-wide wellbeing.

And finally, the comet. Kinists don’t care about it at all. The odds that anything 70 million years from now resembles them genetically enough to care about are basically zero.

But universalists care a lot! X-risk management against comet and asteroid impacts just became a lot more important, because most of the remaining total utility of Earth occurs after the 70-million-year mark but before the sun-imposed limit. Thus, preventing that comet strike is literally the first-best thing anybody could ever do, because it will save an absolutely insane amount of future life.

UNLESS! Twist: unless that future life is net negative, as Bentham's Bulldog believes of insects. In that case, the best action is to ensure that an asteroid strike wipes out all life the moment the last net-positive life ends.

Okay, so, what’s the point of all this?

Well, first, not all ethical systems are equally information-sensitive. Because universalist systems have a vastly wider range of concerns than our hypothetical kinist systems, they require more information to truly know what is good. Because objective systems require actual facts about nociception whereas subjective systems just require you to inspect your own intuitions (more or less), they have massively different information requirements.

Moreover, the kinds of information objective and empathetic universalism require are extremely difficult to acquire and interpret: the qualia of bacteria, whether plants have experiences, what it is like to be a fruit bat, etc. For universalism, nociception is just the start: you then have to figure out whether all neural systems render nociception as pain, which means you get right into qualia even without the requirement of empathetic judgments.

Furthermore, as a matter of fact, advocates of these positions have a huge problem: it’s really non-obvious whether the most numerous lives on Earth (insects and such) are net positive or net negative! Bentham's Bulldog thinks net-negative, but plenty of people disagree (FWIW I disagree; I think almost all species probably subjectively value their own lives positively on average, at least if they are reproducing). The fact that there is such a huge uncertainty band around the most important ethical question, and that there also doesn’t seem to be an obvious pathway to resolving it (no number of Substack posts will substitute for counting the nociceptors in every species of grasshopper or doing a complete hormonal workup of bumblebee life courses, multiplied across 8.7 million different species on Earth!), is a huge problem! And yes, a symptom of that problem is that a single new study could mean the difference between Bentham's Bulldog being morally required to advocate spraying broad-spectrum insecticides on every inch of the Amazon, and him being morally required to advocate the complete prohibition of all insecticides everywhere on Earth, which would cause pretty rapid human population decline due to starvation after crop failures (and, interestingly, heavier use of animal grazing).
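To make the sensitivity point concrete, here is a toy Python sketch of how a total-welfare (universalist) verdict behaves when the uncertainty band on average insect welfare straddles zero. It assumes total welfare is simply population times mean per-individual welfare. The population figure, the welfare units, the bands, and the function names (`total_welfare`, `universalist_verdict`) are all hypothetical placeholders, not empirical estimates.

```python
"""Toy sketch: why a total-welfare verdict over a huge population is so
sensitive to new information. Every number here is a hypothetical
placeholder, not an empirical estimate."""

# Hypothetical order-of-magnitude placeholder for the number of insects alive.
INSECT_POPULATION = 10**19


def total_welfare(mean_welfare: float, population: int = INSECT_POPULATION) -> float:
    """Total welfare as a simple sum: population times mean welfare per individual."""
    return population * mean_welfare


def universalist_verdict(low: float, high: float,
                         population: int = INSECT_POPULATION) -> str:
    """Map an uncertainty band [low, high] on mean per-individual welfare
    (hypothetical units) to a policy verdict."""
    if low > high:
        raise ValueError("low must not exceed high")
    # Even the pessimistic end of the band is positive.
    if total_welfare(low, population) > 0:
        return "net positive: protect insect populations"
    # Even the optimistic end of the band is negative.
    if total_welfare(high, population) < 0:
        return "net negative: reduce insect populations"
    return "undetermined: the band straddles zero"


if __name__ == "__main__":
    # A wide (hypothetical) band that straddles zero: no verdict.
    print(universalist_verdict(-0.5, 0.5))
    # One (hypothetical) study narrows the band slightly below zero...
    print(universalist_verdict(-0.02, -0.01))
    # ...or slightly above zero, and the recommended policy flips.
    print(universalist_verdict(0.01, 0.02))
```

Because the population is positive, it never changes the sign of the total, only the size of the stakes; the sign is set entirely by the mean, which is exactly the quantity a single new study could nudge across zero.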

So when people point out that information causes massive updates, we are not making some stupid point saying information should never matter. We are pointing out that the kinds of arguments Bentham's Bulldog and the others in that camp advocate are uniquely information-hungry and uniquely sensitive to new information… and in fact to new information generated in studies which are unlikely to be replicated or reproduced, and likely to be highly appealing to the most motivated, activist-y scholars least likely to apply appropriate rigor (note that there are already published replication failures in the shrimp pain literature, which Rethink Priorities and other assessments scrupulously ignore).

And finally… Bentham's Bulldog is talking out of both sides of his mouth here. He (I assume approvingly) quoted this passage from Astral Codex Ten:

The article he’s quoting is, I think, a really fine article; I enjoyed it too. And Scott is explicitly pointing out there that a rational being should not have a moral system so oversensitive to small amounts of new input. He focuses on emotions, but the emotions are just responses to information: anecdotes about wartime suffering. Scott’s point is that it is a bad thing for moral judgments to be highly sensitive to the latest 0.5% of information!

That doesn’t mean they should be totally insensitive, simply that the degree of sensitivity is an important metric, and that it is bad when it is very high. I would argue moral systems totally insensitive to new information are also bad. “Subjective Kinism” seems quite bad to me, for example! “Moral conduct is just doing whatever I feel advances my genes” is pretty obviously a super bad view of what counts as moral conduct, and one highly insensitive to a broad range of kinds of information.

I enjoy dunking on the shrimpists, they enjoy dunking on me, we all benefit from the engagement, and, like insects breeding because of evolutionary reward signals, we keep doing it because of the reward signals. But amid the cloud of Substack-gasms arising from the engagement that this entertaining public quasi-beef generates, I hope the shrimpists will actually reflect a bit more. You are making sweeping moral judgments with virtually no empirical basis. Many of the judgments you make about shrimp are based on studies of non-shrimp species separated from shrimp by hundreds of millions of years of evolution: shrimp and crayfish diverged 450 million years ago! They are less related than humans and shrimp! Mantis shrimp and tiger shrimp diverged 400 million years ago! The two most commonly farmed shrimp (Litopenaeus vannamei and Penaeus monodon) diverged 20-45 million years ago.

Humans and chimpanzees diverged 6 to 9 million years ago. Granted, humans and chimpanzees show greater raw genetic divergence than vannamei and monodon do, but that’s because vannamei and monodon have enormous amounts of repetitive genetic sequence (for science reasons): vannamei and monodon are likely more dissimilar than humans and chimps once you drop the 30-60% of their genomes that are just copy-pasta of other parts of the genome.

That is to say, you can’t just say “Shrimp have trait X” or “Insects have trait X.” Culinarily, agronomically, or even behaviorally similar species may have massively different actual experiences and lives. If the shrimpists spent less time on repetitive philosophical arguments bereft of data, and more time doing the empirical labwork necessary to convince rational people that these animals suffer meaningfully, their cause would be better served. It’s literally a standing joke in science when a STUDY FINDS something and it turns out it was… “in mice.” The share of “in mice” studies that pan out “in humans” is not very high!